In the last article of the Extract, Transform, and Load (ETL) series, we talked about how using a canonical object can decouple data sources from downstream components, making the pipeline reusable and easy to maintain. In this article, we will discuss one of those downstream components: the business rules.

Business rules are one of the most important pieces of the pipeline. They ensure that the submitted data meets the business’s standards and use cases. Without business rules, bad data that passes format validation may still be persisted and leave the company’s data store in a bad state. There are countless commercial off-the-shelf (COTS) and free and open-source (FOSS) products available for implementing business rules. These products offer a variety of pros and cons, which we will not discuss in this article. In most scenarios, hand-built business rules are sufficient and easy to implement. In this article, I will describe two approaches to implementing business rules, Hierarchical and Autonomous, along with the pros and cons of each.

Hierarchical Business Rules

The idea behind Hierarchical Business Rules is that an object graph is validated top-down. In this system, each validation or rule relies on the previous rule, so the rules execute in order of dependency. For example, suppose every object in a list must have a unique identifier. Checking uniqueness depends on each object having an identifier specified. In a hierarchical system, efficiency is gained by checking each object for its identifier while building the list of unique identifiers.
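The identifier example can be sketched as a single pass in Python. This is a minimal illustration, not a prescribed implementation: the `Record` type and the `check_unique_ids` function are hypothetical names, and a missing identifier is assumed to be represented as `None`. The presence check (the prerequisite rule) runs inside the same loop that builds the set of seen identifiers (the dependent rule).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Record:
    # Hypothetical record type; "id" is None when the source omitted it.
    id: Optional[str]


def check_unique_ids(records: list[Record]) -> list[str]:
    """Hierarchical check: id presence is verified in the same pass that
    builds the set of seen ids, so uniqueness is only evaluated for
    records that actually have an id."""
    violations: list[str] = []
    seen: set[str] = set()
    for index, record in enumerate(records):
        if record.id is None:
            # Prerequisite rule failed; the dependent uniqueness check is skipped.
            violations.append(f"record {index}: missing id")
            continue
        if record.id in seen:
            violations.append(f"record {index}: duplicate id {record.id!r}")
        else:
            seen.add(record.id)
    return violations
```

For example, `check_unique_ids([Record("a"), Record(None), Record("a")])` reports the missing identifier on record 1 and the duplicate on record 2, with only one scan of the list.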

Pros

- Runs quickly

- Can have identification and data manipulation as part of execution

- There is a known order

- Multiple validations can occur at once

- Typically efficient

Cons

- May be more complicated to test

- Is not modular

- Is not decoupled from other rules

- The rule chain may exit before all the rules are checked
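Several of these pros and cons show up in a small rule chain. The sketch below is hypothetical (the `Node`, `ChainState`, `build_index`, `check_parents`, and `run_chain` names are illustrative assumptions): the first rule builds an index of identifiers, the second rule reuses that index to cross-reference parent identifiers, and the chain stops at the first failing rule, so later rules may never be evaluated.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Node:
    # Hypothetical object: an id plus an optional reference to a parent id.
    id: Optional[str]
    parent_id: Optional[str] = None


@dataclass
class ChainState:
    nodes: list[Node]
    index: dict[str, Node]  # built by an earlier rule, consumed by later rules
    violations: list[str]


# Each rule returns True to let the chain continue, False to stop it.
Rule = Callable[[ChainState], bool]


def build_index(state: ChainState) -> bool:
    # Rule 1: every node needs a unique id; also builds the lookup used by rule 2.
    for node in state.nodes:
        if node.id is None or node.id in state.index:
            state.violations.append(f"bad or duplicate id: {node.id!r}")
            return False
        state.index[node.id] = node
    return True


def check_parents(state: ChainState) -> bool:
    # Rule 2: relies on rule 1 having passed, so it needs no null checks on ids.
    for node in state.nodes:
        if node.parent_id is not None and node.parent_id not in state.index:
            state.violations.append(f"{node.id}: unknown parent {node.parent_id!r}")
    return not state.violations


def run_chain(nodes: list[Node], rules: list[Rule]) -> list[str]:
    state = ChainState(nodes=nodes, index={}, violations=[])
    for rule in rules:  # the list order is the known dependency order
        if not rule(state):
            break  # later rules are never evaluated
    return state.violations
```

With `run_chain([Node(None), Node("b", parent_id="zzz")], [build_index, check_parents])`, only the identifier problem is reported; the unknown parent goes unnoticed until the identifier is fixed and the chain is run again.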

Autonomous Business Rules

When each business rule is implemented as its own independent rule instance, the business rule is Autonomous. Typically, this means that no rule depends on another rule having run. If one rule checks for the presence of an element or object and another rule cross-references that field or object, the second rule must handle the case where the field or object is missing in order to remain Autonomous. These rules are often the easiest to maintain and test because each rule is decoupled from the others. However, they can be costly to run, for example when the object graph is large and each rule must scan all of it.
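The null-handling requirement can be illustrated with two independent rules in Python. The `Order` and `Customer` types, the rule functions, and the set of supported countries are hypothetical. The cross-referencing rule cannot assume the presence rule ran first, so it guards against the missing customer itself and leaves reporting that problem to the presence rule.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Customer:
    id: str
    country: Optional[str] = None


@dataclass
class Order:
    # Hypothetical object graph: an order that should reference a customer.
    order_id: str
    customer: Optional[Customer] = None


def customer_present(order: Order, violations: list[str]) -> None:
    # Autonomous rule 1: presence check only.
    if order.customer is None:
        violations.append(f"{order.order_id}: customer is required")


def customer_country_supported(
    order: Order,
    violations: list[str],
    supported: frozenset[str] = frozenset({"US", "CA"}),  # assumed example values
) -> None:
    # Autonomous rule 2: cross-references the customer. It cannot rely on
    # rule 1, so it protects itself against missing data and simply skips.
    if order.customer is None or order.customer.country is None:
        return
    if order.customer.country not in supported:
        violations.append(
            f"{order.order_id}: unsupported country {order.customer.country!r}"
        )
```

Because neither rule depends on the other, they produce the same violations in either order, and the missing customer is reported exactly once.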

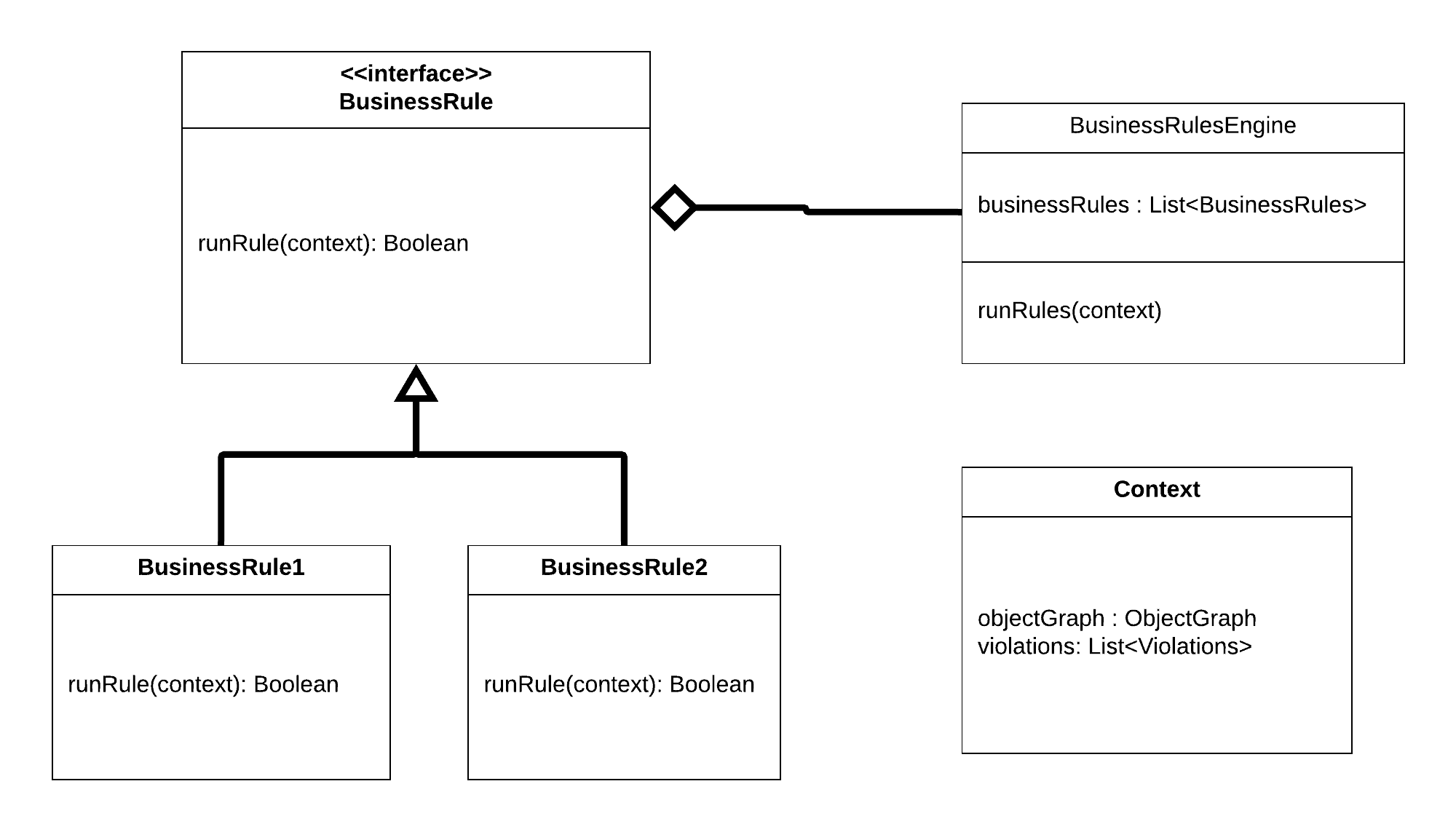

A typical implementation uses an interface that defines the execution method, which operates on a context. Concrete rules implement the interface and are instantiated and kept in a list, which the BusinessRulesEngine executes. The context passed into each rule should contain the object graph and a list of violations, which each business rule populates. After all the rules have run, the system can decide what to do with the violation information. The class diagram above describes this design.
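A minimal Python sketch of this design follows, using an abstract base class as the interface. Apart from `BusinessRulesEngine`, every name here (`BusinessRule`, `RuleContext`, `Violation`, and the two example rules) is a hypothetical choice, and the object graph is simplified to a dictionary.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass, field
from typing import Any


@dataclass
class Violation:
    rule: str
    message: str


@dataclass
class RuleContext:
    # The object graph under validation plus the shared violations list.
    graph: dict[str, Any]
    violations: list[Violation] = field(default_factory=list)


class BusinessRule(ABC):
    """Interface that every concrete rule implements."""

    @abstractmethod
    def execute(self, context: RuleContext) -> None:
        """Inspect context.graph and append any problems to context.violations."""


class NameRequired(BusinessRule):
    def execute(self, context: RuleContext) -> None:
        if not context.graph.get("name"):
            context.violations.append(Violation("NameRequired", "name is required"))


class QuantityPositive(BusinessRule):
    def execute(self, context: RuleContext) -> None:
        quantity = context.graph.get("quantity")
        # Null protection: a missing quantity is left to some other rule.
        if quantity is not None and quantity <= 0:
            context.violations.append(
                Violation("QuantityPositive", f"quantity must be > 0, got {quantity}")
            )


class BusinessRulesEngine:
    def __init__(self, rules: list[BusinessRule]) -> None:
        self.rules = list(rules)

    def run(self, graph: dict[str, Any]) -> list[Violation]:
        context = RuleContext(graph=graph)
        # Every rule runs, even after earlier violations, so the caller
        # receives the full set of violations for this run.
        for rule in self.rules:
            rule.execute(context)
        return context.violations
```

Running `BusinessRulesEngine([NameRequired(), QuantityPositive()]).run({"quantity": 0})` returns both violations. The engine only collects them; whether to reject, quarantine, or log the record stays a separate decision for the caller.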

Pros

- Rules are decoupled from one another

- Can get a full set of violations for each run

- Easy to test

- Easy to maintain

Cons

- Depending on the implementation, there may be no guarantee of execution order

- May be inefficient to run, because setup tasks may need to be repeated for each rule

- More null protection is needed

To increase the efficiency of autonomous business rules, similar checks can be grouped into the same rule. A good example is a rule that checks an entire object at once: one rule could check all the required fields for a specific object type.
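A grouped required-fields rule might look like the following Python sketch. The `RequiredFieldsRule` class, the dictionary-based graph keyed by object type, and the treatment of `None` and empty strings as "missing" are all assumptions made for illustration. The rule scans the objects of its type once and evaluates every required field during that scan, instead of running one rule (and one scan) per field.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class RuleContext:
    # Hypothetical graph layout: object type name -> list of objects (dicts).
    graph: dict[str, list[dict[str, Any]]]
    violations: list[str] = field(default_factory=list)


class RequiredFieldsRule:
    """One autonomous rule covering every required field of one object type."""

    def __init__(self, object_type: str, required: tuple[str, ...]) -> None:
        self.object_type = object_type
        self.required = required

    def execute(self, context: RuleContext) -> None:
        # A single scan of this type's objects; all field checks share it.
        for index, obj in enumerate(context.graph.get(self.object_type, [])):
            for name in self.required:
                # Assumption: None and "" both count as a missing value.
                if obj.get(name) in (None, ""):
                    context.violations.append(
                        f"{self.object_type}[{index}]: {name} is required"
                    )
```

For a graph such as `{"customer": [{"id": "c1", "email": ""}, {"email": "x@example.com"}]}`, `RequiredFieldsRule("customer", ("id", "email"))` reports the empty email on the first customer and the missing id on the second.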

Conclusion

Business rules are an important part of every ETL pipeline and should not be skipped. The whole point of an ETL system is to capture data, and downstream systems are only as good as the quality of that data. Business rules ensure that the data quality remains high.

In the next ETL article we will talk about identification and persistence of data.